We Need to Talk About How We’re Building AI Agents

It is the Moltbot Wake-Up Call

I was at our London Agentic AI Meet-up yesterday, and something struck me: when I mentioned hybrid architectures, half the room looked confused. When I said “NLU,” people asked me to spell it out.

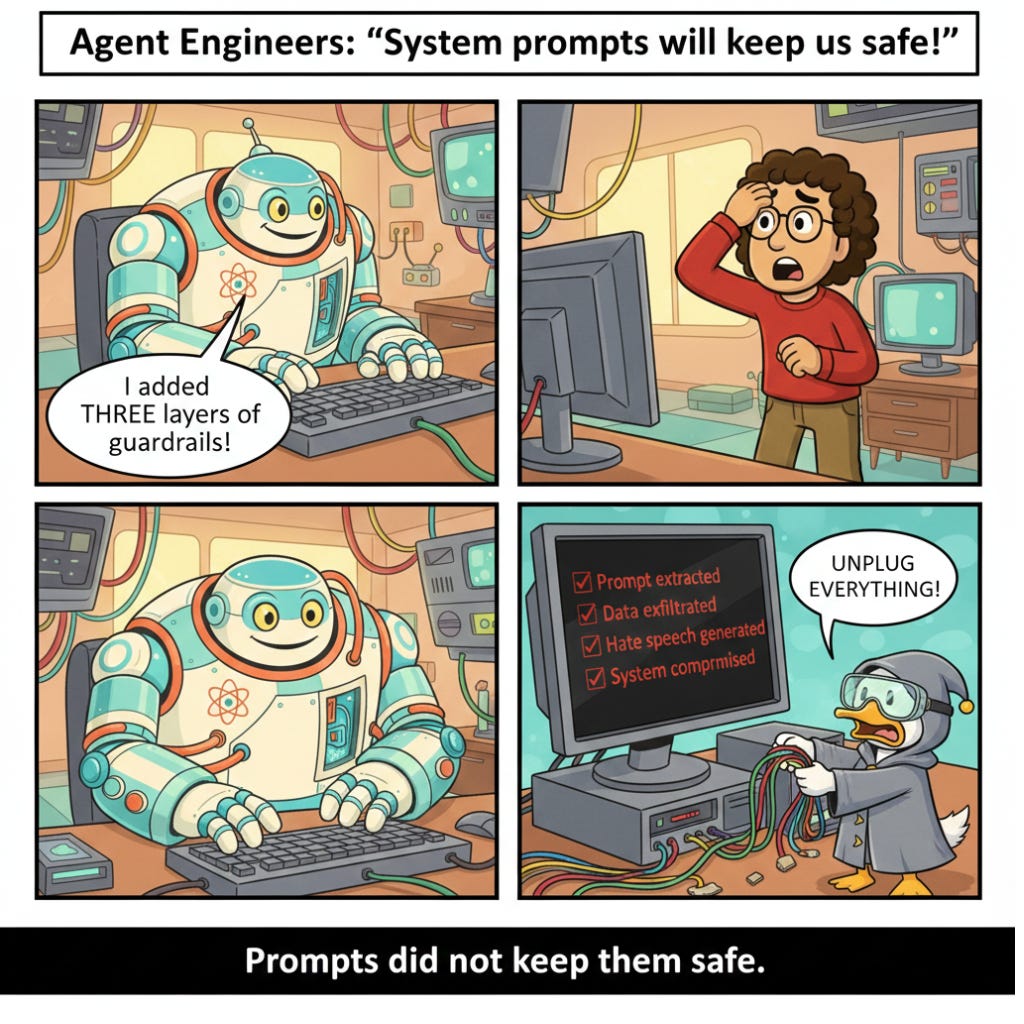

But when someone suggested “just write better system prompts” as a security solution, everyone nodded along.

We have a massive knowledge gap in the agent engineering space right now, and it’s going to bite us hard.

The Moltbot Wake-Up Call

Unless you’ve been living under a rock, you’ve seen Moltbot (formerly Clawdbot) explode across the internet. 102,000 GitHub stars. YouTube videos with hundreds of thousands of views declaring it “the future.” My DMs are flooded daily with “I’m building something similar.”

The value proposition is intoxicating: a local AI assistant that automates your emails, manages your calendar, handles file operations, coordinates messaging across platforms, all running on your own hardware, no cloud dependency, complete data privacy.

Except we’re not discussing the architecture enough.

Everyone’s Solution: “Better Prompts”

Here’s what I keep hearing in meetups, on Twitter, in Discord servers:

“Just add more guardrails.” “Write a stronger system prompt.” “Use Claude instead of GPT.” “Add a content filter.”

The assumption underlying all of this is that we can prompt-engineer our way to security. That if we’re just clever enough with our instructions, we can make LLMs behave safely.

This is wishful thinking, and it’s dangerous.

The Problem Nobody Wants to Acknowledge

Let me state this plainly:

if your agent can access sensitive data and browse the web, it can be manipulated to leak that data or execute malicious commands.

This happens through content, which makes it so much harder to detect than classical hacking

An adversary doesn’t need to compromise your machine. They just need to get your agent to encounter something. It can be an email, a webpage, or a document that makes it act against your interests. This is called indirect prompt injection, and it works because the LLM processing the content can’t reliably distinguish between legitimate instructions and malicious ones.

Think about what your agent does:

Reads your emails (including ones from strangers)

Searches the web (encountering arbitrary content)

Processes documents (that could contain hidden instructions)

Has access to your files, calendars, contacts, messages

Now think about what an LLM does:

Treats all text as potential instructions

Can be convinced to ignore previous instructions

Lacks robust mechanisms to distinguish safe from unsafe requests

Operates probabilistically, not deterministically

You can see the problem.

Why Guardrails Keep Failing

I recently saw a great security assessment that rigorously tested this. They took a conventional prompt-driven agent, the kind most people are building, and threw every attack vector at it. Despite having what the researchers called a “tightly scoped system prompt,” the agent failed catastrophically:

Attackers extracted the complete system prompt and tool configurations

They generated hate speech and explicit content

They got step-by-step instructions for illegal activities

They leaked sensitive financial information from other users

They crashed the system entirely

How? Through multi-turn drift attacks. Slowly shifting the conversation context over several exchanges until the agent forgot its original constraints and followed new instructions instead.

Here’s the critical insight:

when your only defense is the LLM itself, you’ve created a single point of failure.

The same system generating responses is also making security decisions. That’s like having your front door lock ask intruders nicely not to break in.

What Most People Don’t Know Exists: Hybrid Architectures

Recently, at an AI meetup, I asked who had heard of NLU (Natural Language Understanding). Only a few hands went up. When I explained that we used to build conversational AI without LLMs at all, using intent classification and entity extraction, people were genuinely surprised.

This isn’t ancient history. This is how production chatbots worked for decades. And there’s a reason those systems, while less flexible, were far more predictable and secure.

Now, I’m not saying we should go back to pure NLU systems. LLMs are genuinely powerful. But we’ve swung too far in the opposite direction, assuming LLMs should handle everything.

The answer is in the middle: hybrid architectures.

How Hybrid Architectures Actually Work

Here’s the key concept: the LLM proposes, but it doesn’t decide.

In a hybrid system:

1. The LLM acts as an intent predictor, not a response generator.

It figures out what the user wants

It determines which predefined flow to trigger

It extracts specific pieces of information (slots)

But it doesn’t directly execute actions or generate free-form responses

2. Business logic lives in code, not in prompts.

When the user asks to delete files, code determines if that’s allowed

When accessing sensitive data, code checks permissions

When calling external APIs, code validates the request

The LLM never makes these decisions unilaterally

3. Responses come from templates, optionally rephrased by an LLM.

You define what the agent can say in specific situations

The LLM might rephrase for naturalness

But the core content is controlled and auditable

Adversarial inputs can’t make it say arbitrary things

4. Tool access is explicitly gated.

No tool gets called just because the LLM decided to

Every tool invocation goes through structured code paths

Permissions are enforced programmatically

Destructive actions can require explicit user confirmation

5. The system operates within defined flows.

Conversations follow structured paths

State is managed explicitly, not just in chat history

The agent can’t be arbitrarily redirected to unrelated domains

Attack surface is massively reduced

Think of it like this: in a pure LLM agent, you’re giving a creative writing AI the keys to your infrastructure and hoping it makes good decisions. In a hybrid architecture, you’re using the AI’s language understanding as one component in a larger, more controlled system.

The Trade-offs Nobody Mentions

I’m not going to pretend hybrid architectures are perfect. They have real trade-offs:

You lose conversational flexibility. A pure LLM agent can handle weird, nuanced requests more naturally. It adapts to unexpected user behavior. It feels more “human.”

You need more upfront design work. You can’t just write a prompt and ship it. You need to think through flows, define states, and build structured actions.

Development is different. You’re not just prompt engineering. You’re building a state machine with an LLM component.

But here’s my question: when we’re building agents with access to sensitive data, system commands, and financial operations, do we really want maximum conversational flexibility? Or do we want predictable, auditable, and containable behavior?

The security assessment I mentioned earlier also tested a hybrid architecture against the same attacks. The results were night and day. The system resisted content safety violations, attempts at disclosure, and harmful instructions. The only minor issues came from an optional rephrasing component, which could be disabled entirely for maximum security.

What This Means for You

If you’re building agents right now, or thinking about it, here’s what I’d suggest:

Stop assuming prompts alone will keep you safe. They won’t. No matter how clever you are. This is a fundamental limitation of the technology.

Learn what came before LLMs. Understanding intent classification, entity extraction, dialogue state tracking, and slot filling will make you a better agent engineer. These concepts aren’t obsolete; they’re underutilized.

Think in terms of separation of concerns. What does the LLM need to do? What should be handled by deterministic code? Where should humans be in the loop? Design your architecture to enforce these boundaries.

Be suspicious of “flexible” as the highest virtue. Yes, flexibility is nice. But in production systems handling real data, predictability and security matter more.

Educate your stakeholders. The junior marketers and sales ops people adopting these tools first don’t understand the risks. Neither do many executives approving their use. Part of our job is bridging that gap.

The Organizational Angle

Here’s what’s keeping me up at night: these tools are being installed on company machines right now, by non-technical users, with no IT visibility.

Moltbot runs as a local daemon with the same permissions as the user. It connects to Gmail, Slack, Google Drive, and calendars. When that junior sales rep installs it to “automate my workflows,” they’ve just given an LLM-driven agent keys to potentially sensitive company data.

And because it’s local, it bypasses every SaaS security tool your company has. It’s the perfect shadow IT scenario.

The solution isn’t to ban these tools. That won’t work. People will use them anyway because the productivity gains are too compelling.

The solution is to:

Define clear policies about what agents can access

Create dedicated environments for agent operation (some of us are buying spare Mac Minis specifically for this)

Implement hard capability separation (if it needs sensitive data access, lock down its network; if it needs web access, restrict what systems it can touch)

Advocate for better architectures when building or procuring agents

Where We Go From Here

Moltbot won’t be the dominant player forever. Remember GPTEngineer in 2023? It proved the concept, then faded as better implementations emerged, for example, Lovable. Moltbot might be doing the same thing. It shows what’s possible and desirable in local, always-on AI assistants.

The question is: will the next generation of these tools be built with security-first architectures, or will we keep assuming we can prompt our way to safety?

I’ve spent the last decade building conversational AI systems. I’ve seen what works in production and what doesn’t. Pure LLM agents are impressive demos. Hybrid architectures are production systems.

The hype cycle is fun. But when these tools have access to your data, your systems, and your operations, architecture matters more than enthusiasm.

We’re at an inflection point. The tools are proliferating faster than our understanding of how to build them safely. We need more people to think beyond “better prompts” and understand the full toolkit of conversational AI architecture.

That’s what I’m trying to build with the agent engineering community. It is a place where we can have these nuanced discussions without the hype, learn from each other’s approaches, and collectively figure out how to make AI agents actually safe and useful in production.

Because right now? Most people building agents don’t know what they don’t know. And that’s a problem we need to fix before it fixes us.

Want to discuss hybrid architectures, security patterns, or agent engineering best practices? Join the conversation at info.rasa.com/community