Voice AI in 2025: What I Learned at the Rasa + Rime Event in San Francisco

Why does Voice AI still feel frustrating in 2025, despite all the progress and all the investment?

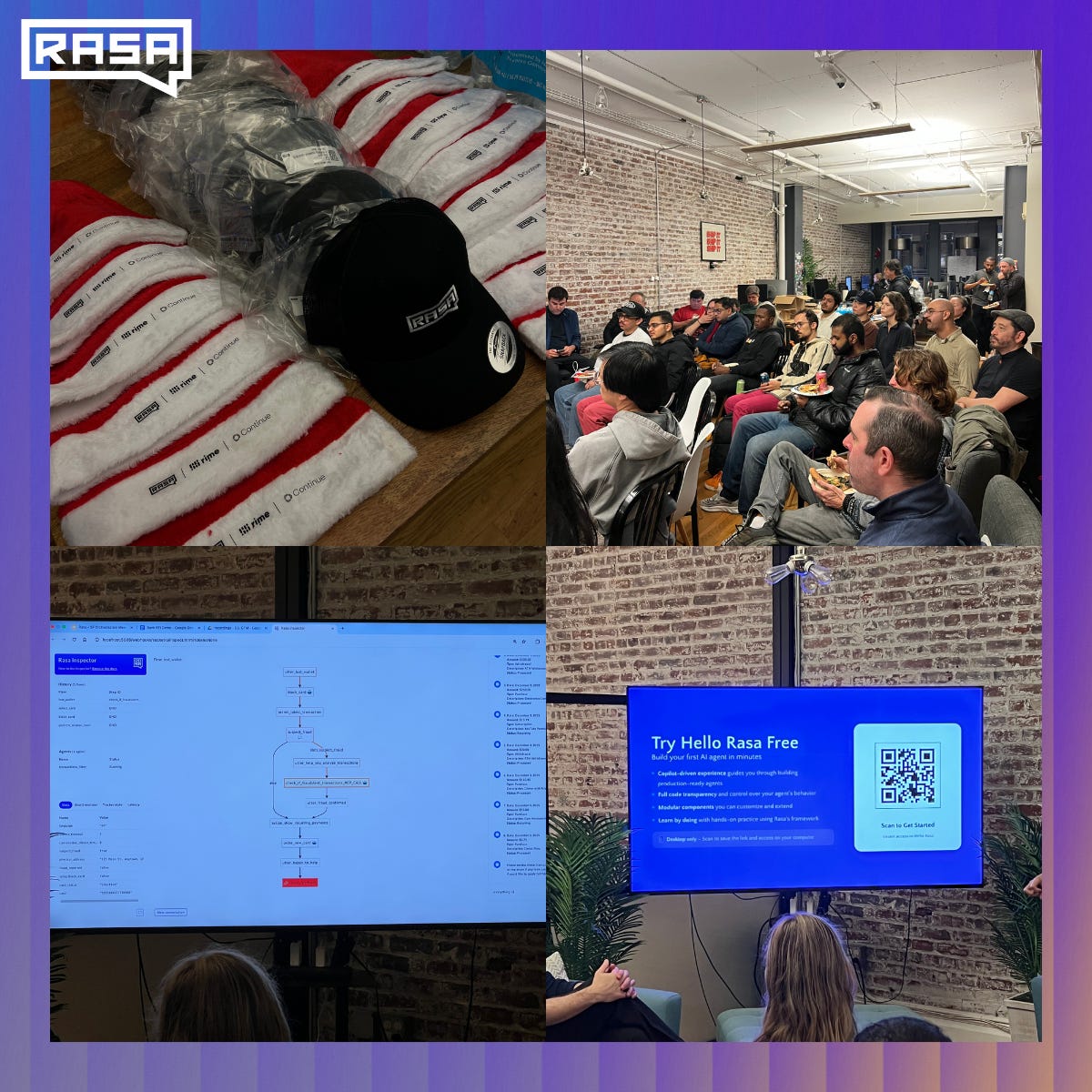

Last night, we hosted a Rasa × Rime event in San Francisco at Continue, “🎁 Unwrap the Future: Voice & Agent Orchestration with Rime + Rasa,” and focused on one question:

Why does Voice AI still feel frustrating in 2025, despite all the progress and all the investment?

The room was full of builders, engineers and founders working on real systems. We had more than 160 registrations! I spoke with a few of the attendees, and their thoughts on voice AI were quite honest.

This post is my synthesis of what I saw and heard, what stood out, what clicked, and what still feels unresolved.

The State of Voice AI: Adoption Is Real. Friction Is Too.

The event opened with Lily from Rime walking us through the state of the voice AI market.

She shared some interesting facts:

There are now 500-1,000 voice AI startups

Over $2B has been invested since 2024

Accuracy, latency, turn-taking, barge-in, speaker separation, hallucinations, and context handling are still active problems

Most of these have improved dramatically in the last two years. But improvement isn’t the same as delight.

One stat stuck with me: 40 to 50% of people still hang up after the first sentence of a voice agent.

This is not after the second sentence. And not after a failed task. But after the first thing the agent says.

That’s the real benchmark for anyone in the space to beat.

If Amazon lost 40% of users on page load, we wouldn’t call it “early progress”. We’d call it broken.

Latency Was the Obsession. It’s Not the Real Bottleneck Anymore.

At the end of 2024, voice AI marketing was all about speed, about having the lowest latency TTS, the fastest token streaming, and “human-like” response times.

Those things matter, but more and more builders are realizing something uncomfortable: speed doesn’t fix bad conversations.

The bigger issues now for builders are:

Turn-taking that feels unnatural

No backchannels (“mm-hmm”, “yeah”, “got it”)

Rigid, form-like interaction patterns

No shared conversational context across turns

Today’s agents still behave like voice-driven forms: Question -> Answer -> Pause -> Repeat.

We humans don’t talk like that.

Speech-to-Speech Models: Promising, But Not a Silver Bullet

There was a lot of discussion around speech-to-speech (S2S) models.

The promise is clear: full-duplex interaction, listening and speaking at the same time, natural interruptions, better expressiveness. But the trade-offs are underappreciated.

One hard problem stood out: pronunciation accuracy.

In enterprise settings, pronunciation is much more than cosmetic. It’s contractual.

There was a trivial example that put this in perspective: Many Americans don’t know how to correctly pronounce the restaurant chain Chipotle.

And if they don’t know the official pronunciation, how is an AI supposed to know that?

Taken further, this becomes a massive challenge for almost any brand or product. If a healthcare agent mispronounces a medication, a bank agent misreads a product name, or a food brand hears its flagship item butchered, it is not seen as “human imperfection”; it becomes brand damage.

Text-mediated pipelines make these issues debuggable while pure audio-to-audio models make them harder to inspect and control.
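To make the “debuggable” point concrete, here is a minimal sketch of a pronunciation lexicon applied to the text before it reaches a TTS engine. It is not Rime’s API; the `LEXICON` dictionary, the `apply_lexicon` helper, and the respelling format are all assumptions for illustration. A real deployment would use whatever phonetic notation its TTS provider accepts.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical brand lexicon: written form -> respelling handed to the TTS engine.
# The respelling is illustrative, not a provider-specific phonetic format.
LEXICON: Dict[str, str] = {
    "Chipotle": "chih-POHT-lay",
}


def apply_lexicon(text: str, lexicon: Dict[str, str]) -> Tuple[str, List[str]]:
    """Replace whole-word lexicon entries and report which entries fired."""
    applied: List[str] = []
    if not lexicon:
        return text, applied

    def substitute(match: "re.Match[str]") -> str:
        word = match.group(0)
        applied.append(word)  # keep an audit trail of every substitution
        return lexicon[word]

    # \b keeps the match to whole words, so "Chipotles" is left untouched.
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, lexicon)) + r")\b")
    return pattern.sub(substitute, text), applied


spoken, hits = apply_lexicon("Your Chipotle order is ready.", LEXICON)
print(spoken)  # Your chih-POHT-lay order is ready.
print(hits)    # ['Chipotle']
```

Because the substitution happens on text, both the rewritten sentence and the list of entries that fired can be logged and reviewed. That is the kind of inspection point a pure audio-to-audio model does not offer.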

This isn’t an argument against S2S. It’s a reminder that progress is uneven and often messy.

A Subtle but Important Point: Superhuman Is the Goal

One of the most thoughtful moments came from a simple distinction: Some human imperfections are comforting, while others are unacceptable.

There are some things that we easily forgive with speech. We forgive accents, we forgive hesitation and we forgive filler words.

But we don’t forgive incorrect facts, we don’t forgive mispronounced names and we don’t forgive errors in high-stakes flows.

Voice AI doesn’t need to sound human. However, it needs to be (significantly) better than human in the right places.

Orchestration Is the Real Infrastructure Layer

After Rime, the focus shifted to Rasa.

The presentation was not about models or about prompts. It was about orchestration.

Alex shared with us that modern enterprises don’t have only one agent. They have dozens: knowledge agents, transactional agents, compliance-bound flows, micro-agents built on small, fine-tuned models.

Protocols like MCP and A2A help standardize communication. But protocols alone don’t create systems.

We still need answers to hard questions:

Who owns the conversation state?

Who decides what happens next?

What happens when an agent fails mid-process?

How do you resume across channels after a dropped call?

This is where Rasa’s architecture shines.
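To illustrate the state-ownership and resume questions, here is a minimal sketch in which a single store owns conversation state and keys it by user instead of by channel or session. This is not Rasa’s API; `ConversationState`, `StateStore`, and every field name are hypothetical, and a production system would persist the state instead of keeping it in memory.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ConversationState:
    user_id: str
    active_flow: Optional[str] = None   # e.g. "block_card"
    step: int = 0                       # index of the next step in the flow
    slots: Dict[str, str] = field(default_factory=dict)
    last_channel: Optional[str] = None  # "voice", "chat", ...


class StateStore:
    """Single owner of conversation state, keyed by user and not by channel."""

    def __init__(self) -> None:
        self._states: Dict[str, ConversationState] = {}

    def load(self, user_id: str, channel: str) -> ConversationState:
        # Create the state on first contact, otherwise return the existing one.
        state = self._states.setdefault(user_id, ConversationState(user_id))
        state.last_channel = channel
        return state


store = StateStore()

# The user starts blocking a card over the phone, then the call drops.
call = store.load("user-42", channel="voice")
call.active_flow, call.step = "block_card", 2
call.slots["card_last4"] = "1234"

# The same user comes back in chat and lands in the same place.
chat = store.load("user-42", channel="chat")
print(chat.active_flow, chat.step, chat.slots, chat.last_channel)
# block_card 2 {'card_last4': '1234'} chat
```

The design choice worth noticing is the key: once state belongs to the user and not to the call, “resume after a dropped call” becomes a lookup and not a special case.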

The Demo: Where Theory Meets Reality

I ran a live demo combining Rasa for orchestration and Rime for voice.

The scenario was simple: a banking voice agent.

What mattered wasn’t the UI. It was the behavior:

Deterministic flows for high-risk actions (like blocking a card)

LLM-powered reasoning inside those boundaries

Seamless switching between process logic, tool calls, and knowledge lookup

Graceful handling of off-topic questions without breaking the task

This is the pattern I keep coming back to: Fluency where it’s safe and determinism where it’s required. Anything else doesn’t survive production.
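A minimal sketch of that pattern, assuming intents have already been classified upstream. It is not the demo’s code and not Rasa’s API: `HIGH_RISK_FLOWS`, `Agent`, and `answer_freely` are hypothetical names, and the LLM is replaced by a stand-in function. The sketch covers only two of the behaviors above, fixed step order for a high-risk action and off-topic handling that does not lose the task.

```python
from typing import Callable, Optional

# Hypothetical high-risk flows: each is a fixed, ordered list of steps.
HIGH_RISK_FLOWS = {
    "block_card": ["verify_identity", "confirm_card", "block_card"],
}


class Agent:
    def __init__(self, answer_freely: Callable[[str], str]):
        self.answer_freely = answer_freely  # stand-in for an LLM call
        self.flow: Optional[str] = None     # name of the active flow, if any
        self.step = 0                       # index of the next step to run

    def handle(self, intent: str, text: str) -> str:
        # Start a deterministic flow when a high-risk intent arrives.
        if self.flow is None and intent in HIGH_RISK_FLOWS:
            self.flow, self.step = intent, 0
            return self._run_next_step()
        # Advance the active flow strictly in order.
        if self.flow is not None and intent in ("continue", self.flow):
            return self._run_next_step()
        # Anything else is answered freely, without losing the flow position.
        reply = self.answer_freely(text)
        if self.flow is not None:
            pending = HIGH_RISK_FLOWS[self.flow][self.step]
            return f"{reply} [resuming at: {pending}]"
        return reply

    def _run_next_step(self) -> str:
        steps = HIGH_RISK_FLOWS[self.flow]
        current = steps[self.step]
        self.step += 1
        if self.step == len(steps):  # flow finished, release control
            self.flow, self.step = None, 0
        return f"[flow] {current}"


agent = Agent(answer_freely=lambda text: f"(llm) {text}")
print(agent.handle("block_card", "Please block my card"))
# [flow] verify_identity
print(agent.handle("other", "What are your opening hours?"))
# (llm) What are your opening hours? [resuming at: confirm_card]
print(agent.handle("continue", "Yes"))
# [flow] confirm_card
print(agent.handle("continue", "Yes"))
# [flow] block_card
```

The free-form answer never changes `step`, so the off-topic question cannot skip or reorder a step of the card-blocking flow.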

All of this was paired with Rime’s robust yet very flexible voice generation.

The audience was surprised that I was able to build this in an afternoon.

A Broader Lens: Continuous AI vs Conversational AI

One lightning talk reframed things in an interesting way. Ty from Continue shared his perspective on what he called Continuous AI vs Conversational AI.

We often think of conversational AI as the primary interface. But many of the biggest productivity wins are happening elsewhere: in CI/CD pipelines, in internal tooling, in background automation.

This idea of “continuous AI” is worth paying attention to: agents triggered by events, instead of being triggered by prompts.

This means that we optimize around intervention rate and not around response beauty. Humans end up supervising workflows instead of chatting with bots.
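As a small worked example, here is one way intervention rate could be computed. The definition is my assumption, not something given in the talk: the share of event-triggered runs in which a human had to step in. The `Run` record and the trigger names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Run:
    trigger: str            # the event that started the run, e.g. "ci_failed"
    human_intervened: bool  # True if a person had to correct or take over


def intervention_rate(runs: List[Run]) -> float:
    """Fraction of runs that needed a human (assumed definition)."""
    if not runs:
        return 0.0
    return sum(run.human_intervened for run in runs) / len(runs)


runs = [
    Run("ci_failed", human_intervened=False),
    Run("ci_failed", human_intervened=True),
    Run("dependency_update", human_intervened=False),
    Run("nightly_build", human_intervened=False),
]
print(intervention_rate(runs))  # 0.25
```

Here one run out of four needed a person, so the rate is 0.25. Under this definition, lower is better.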

The future likely blends both: Conversation as the front door and automation as the engine room.

Eventually, we’ll just call everything “AI” and stop slicing it into categories.

My Takeaway

Voice AI is no longer a model problem. It’s a systems problem.

Seen through that lens, we need systems that manage state, systems that balance autonomy and control, and systems that respect human psychology, not just benchmarks.

If you’re building in this space, my advice is simple: aim to build systems that survive contact with real users.

And if you are building in this space, come and join the Agent Engineering Community, where we discuss how to build these architectures and build AI agents using Hello Rasa.